Description

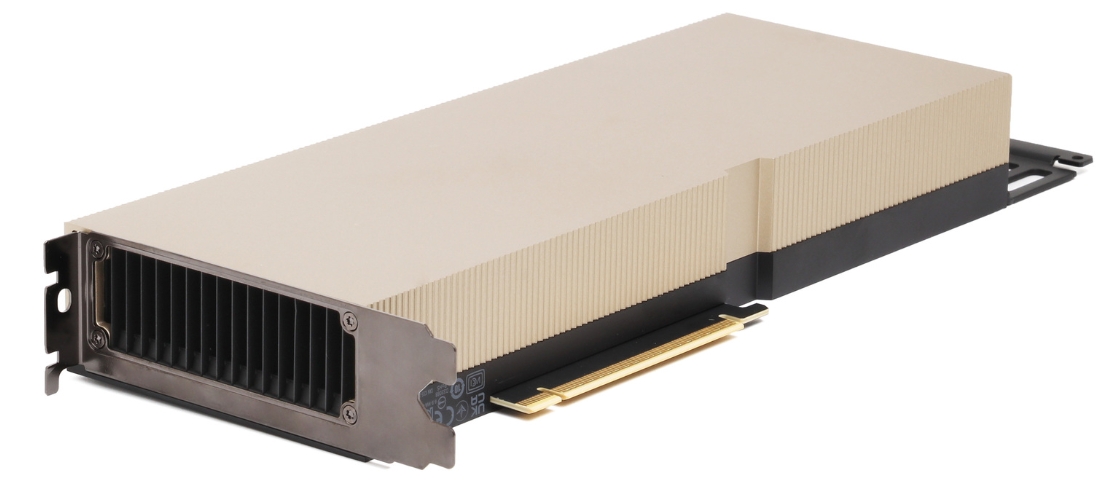

* NVIDIA H100 GPU –

* Category * Specification *

*——————————————————————–*

* GPU Architecture * Hopper (GH100) *

* Process Node * TSMC 4N (custom 4nm) *

* CUDA Cores * 14,592 (FP32) *

* Tensor Cores * 456 (4th Gen) *

* RT Cores * None (focused on AI/HPC, not graphics) *

* FP64 Performance * 60 TFLOPS *

* FP32 Performance * 60 TFLOPS *

* TF32 Performance * 1,000 TFLOPS (Tensor Core-accelerated) *

* FP8 Performance * 2,000 TFLOPS (with Hopper FP8 Transformer Engine) *

* Memory (VRAM) * 80GB HBM3 or 94GB HBM3 (H100 NVL) *

* Memory Bandwidth * 3 TB/s (HBM3) *

* NVLink Bandwidth * 900 GB/s (4th Gen NVLink) *

* PCIe Support * PCIe 5.0 x16 *

* TDP (Power) * 700W (SXM5) / 350W (PCIe) *

* Form Factors * SXM5 (DGX/HGX) / PCIe 5.0 (workstations) *

* Multi-GPU Scaling * NVLink & NVSwitch for DGX H100 SuperPODs *

—

* Key Innovations

1. Hopper FP8 Transformer Engine

– Doubles AI training/inference speed for LLMs (vs. A100 FP16).

– Automatic FP8/FP16 conversion for models like GPT-4.

- HBM3 Memory

– 3 TB/s bandwidth (2x A100) for data-intensive workloads. - Dynamic Programming (DPX)

– Accelerates algorithms (e.g., genomics, robotics pathfinding). - Confidential Computing

– Hardware-based encryption for secure AI in the cloud. - MIG (Multi-Instance GPU)

– Partition into 7x isolated GPUs (e.g., 7x 10GB instances).

—

* Performance vs. A100

– 6x faster AI training (FP8 Transformer Engine).

– 3x faster HPC (FP64).

– 2x memory bandwidth (HBM3 vs. HBM2e).

—

* Use Cases

✔ Large Language Models (LLMs) – GPT-4, ChatGPT-scale training

✔ Scientific Computing – Climate modeling, quantum simulation

✔ Edge AI – Real-time inference for autonomous systems

✔ Cloud AI – AWS/Azure H100 instances for generative AI

—

* H100 Variants

– H100 PCIe (350W) – For servers/workstations.

– H100 SXM5 (700W) – For DGX/HGX supercomputing.

– H100 NVL (94GB VRAM) – Dual-GPU card for massive LLMs.

The H100 is the engine behind modern AI breakthroughs , powering next-gen datacenters and supercomputers.

The A100 remains a workhorse for datacenters , powering everything from large language models to supercomputing clusters.

Reviews

There are no reviews yet.